
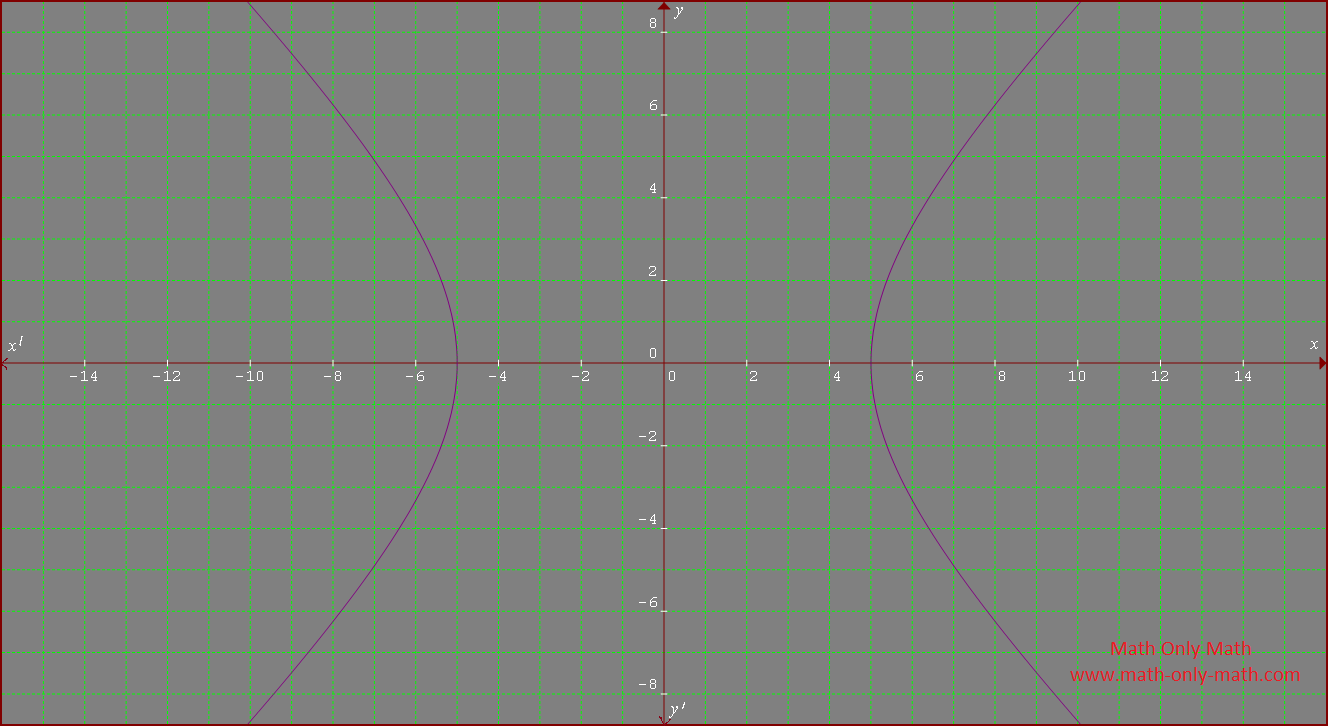

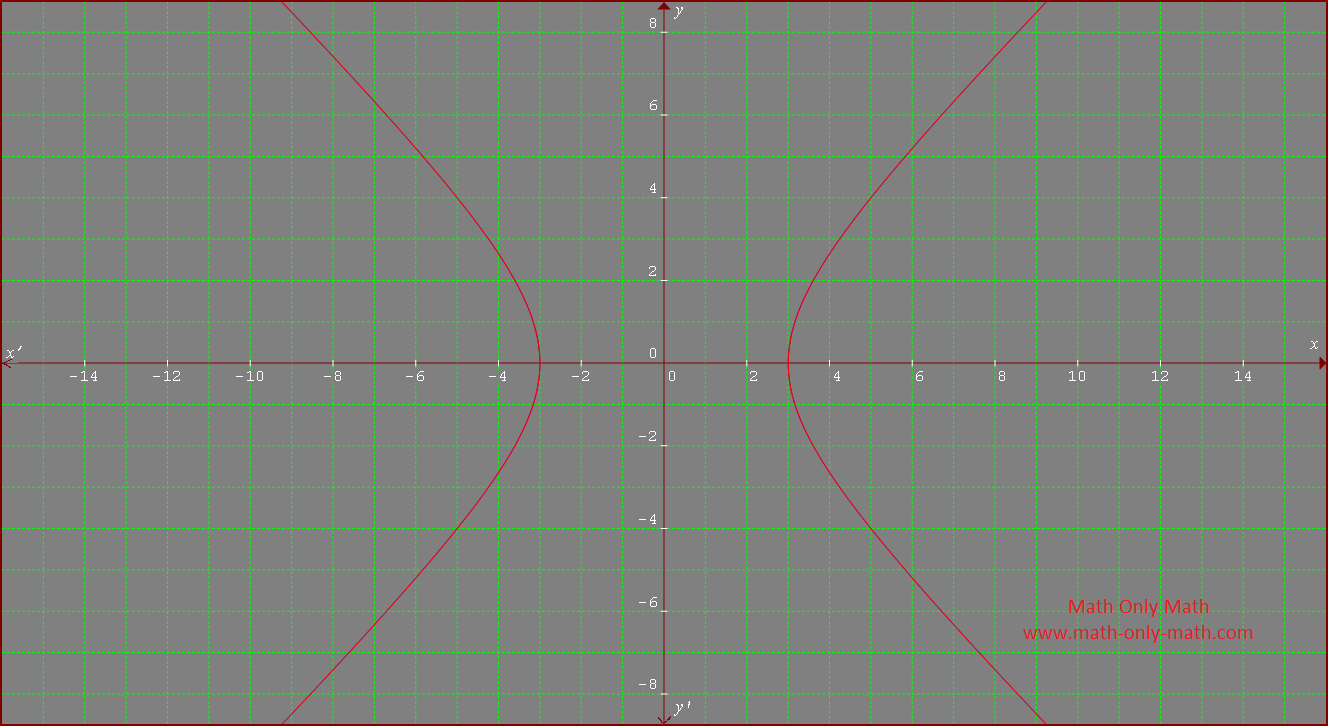

Rectangular Hyperbola

What is a rectangular hyperbola?

When the transverse axis of a hyperbola is equal in length to its conjugate axis, the hyperbola is called a rectangular or equilateral hyperbola.

The standard equation of the hyperbola is \(\frac{x^{2}}{a^{2}} - \frac{y^{2}}{b^{2}} = 1\) ………… (i)

The transverse axis of the hyperbola (i) is along the x-axis and its length is 2a.

The conjugate axis of the hyperbola (i) is along the y-axis and its length is 2b.

By the definition of a rectangular hyperbola, 2a = 2b, that is, a = b.

Therefore, substituting a = b in the standard equation of the hyperbola (i), we get,

\(\frac{x^{2}}{a^{2}} - \frac{y^{2}}{a^{2}} = 1\)

⇒ \(x^{2} - y^{2} = a^{2}\), [multiplying both sides by \(a^{2}\)]

which is the equation of the rectangular hyperbola.
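As a quick numerical check of this substitution, the sketch below picks sample values of x with |x| ≥ a, computes the matching y ≥ 0 from \(x^{2} - y^{2} = a^{2}\), and confirms that every such point also satisfies the standard form (i) when b = a. The helper names `on_standard_hyperbola` and `rectangular_points`, the tolerance, and the sample values are illustrative assumptions, not part of the original derivation.

```python
import math

def on_standard_hyperbola(x, y, a, b, tol=1e-9):
    """Return True if (x, y) satisfies x^2/a^2 - y^2/b^2 = 1 within tol."""
    return abs(x**2 / a**2 - y**2 / b**2 - 1) < tol

def rectangular_points(a, xs):
    """For each x with |x| >= a, return the point (x, y), y >= 0,
    lying on the rectangular hyperbola x^2 - y^2 = a^2."""
    return [(x, math.sqrt(x**2 - a**2)) for x in xs if abs(x) >= a]

a = 3.0  # assumed sample value of the semi-transverse axis
points = rectangular_points(a, [3.0, 4.0, 5.0, -6.5, 10.0])

# With b = a, each point of x^2 - y^2 = a^2 also satisfies form (i).
print(all(on_standard_hyperbola(x, y, a, a) for x, y in points))  # True
```

Values of x with |x| < a are skipped because the rectangular hyperbola has no real points there.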

1. Show that the eccentricity of any rectangular hyperbola is √2.

Solution:

For the standard hyperbola \(\frac{x^{2}}{a^{2}} - \frac{y^{2}}{b^{2}} = 1\), the eccentricity e satisfies \(b^{2} = a^{2}(e^{2} - 1)\).

Again, by the definition of a rectangular hyperbola, a = b.

Therefore, substituting b = a in this relation, we get,

\(a^{2} = a^{2}(e^{2} - 1)\)

⇒ \(e^{2} - 1 = 1\), [dividing both sides by \(a^{2}\), since a ≠ 0]

⇒ \(e^{2} = 2\)

⇒ e = √2, [taking the positive root, since e > 0]

Thus, the eccentricity of a rectangular hyperbola is √2.
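The relation \(b^{2} = a^{2}(e^{2} - 1)\) can be solved for e as \(e = \sqrt{1 + \frac{b^{2}}{a^{2}}}\). The minimal sketch below, using a hypothetical helper `eccentricity` and arbitrarily chosen sample values of a, evaluates this with b = a and shows that the result does not depend on a.

```python
import math

def eccentricity(a, b):
    """Eccentricity of x^2/a^2 - y^2/b^2 = 1, from b^2 = a^2 (e^2 - 1)."""
    return math.sqrt(1 + b**2 / a**2)

# For a rectangular hyperbola b = a, so e should equal sqrt(2) for every a > 0.
for a in (0.5, 1, 3, 25):
    print(a, eccentricity(a, a))  # e is about 1.41421 on every line
```

Because b²/a² = 1 whenever b = a, the value of a cancels out, which is exactly the step "e² − 1 = 1" in the proof above.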

2. Find the eccentricity, the co-ordinates of the foci and the length of the semi-latus rectum of the rectangular hyperbola \(x^{2} - y^{2} - 25 = 0\).

Solution:

The given rectangular hyperbola is \(x^{2} - y^{2} - 25 = 0\).

Rewriting it, we get,

\(x^{2} - y^{2} = 25\)

⇒ \(x^{2} - y^{2} = 5^{2}\)

⇒ \(\frac{x^{2}}{5^{2}} - \frac{y^{2}}{5^{2}} = 1\)

The eccentricity of the hyperbola is

\(e = \sqrt{1 + \frac{b^{2}}{a^{2}}}\)

\(= \sqrt{1 + \frac{5^{2}}{5^{2}}}\), [since a = 5 and b = 5]

= √2

The co-ordinates of its foci are (± ae, 0) = (± 5√2, 0).

The length of the semi-latus rectum = \(\frac{b^{2}}{a} = \frac{5^{2}}{5} = \frac{25}{5} = 5\).
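The three quantities found above follow directly from a and b, so they can be bundled into one small function. The sketch below is an illustration only: the name `hyperbola_properties` is hypothetical, and it assumes the hyperbola is already in the standard form (i), here with a = b = 5.

```python
import math

def hyperbola_properties(a, b):
    """Return (eccentricity, foci, semi-latus rectum) of x^2/a^2 - y^2/b^2 = 1."""
    e = math.sqrt(1 + b**2 / a**2)        # e = sqrt(1 + b^2/a^2)
    foci = ((a * e, 0.0), (-a * e, 0.0))  # (± ae, 0)
    semi_latus = b**2 / a                 # semi-latus rectum = b^2 / a
    return e, foci, semi_latus

# x^2 - y^2 - 25 = 0 rewrites as x^2/5^2 - y^2/5^2 = 1, so a = b = 5
e, foci, semi_latus = hyperbola_properties(5, 5)
print(e, foci, semi_latus)  # e is about 1.41421, foci about (±7.07107, 0), 5.0
```

The printed foci are the decimal form of (± 5√2, 0) obtained in the solution.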

3. What type of conic is represented by the equation \(x^{2} - y^{2} = 9\)? What is its eccentricity?

Solution:

The given equation of the conic is \(x^{2} - y^{2} = 9\)

⇒ \(x^{2} - y^{2} = 3^{2}\), which is the equation of a rectangular hyperbola with a = b = 3.

A hyperbola whose transverse axis is equal to its conjugate axis is called a rectangular or equilateral hyperbola.

Since the eccentricity of every rectangular hyperbola is √2, the eccentricity of the given conic is √2.
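The reasoning in problem 3 can be sketched for any equation of the form \(x^{2} - y^{2} = c\): when c > 0, it is \(x^{2} - y^{2} = a^{2}\) with a = b = √c. The helper `classify_x2_minus_y2` below is hypothetical, and as an assumption of this sketch it simply rejects c ≤ 0 instead of handling those cases.

```python
import math

def classify_x2_minus_y2(c):
    """For x^2 - y^2 = c with c > 0, return (a, b, e) of the equivalent
    standard form x^2/a^2 - y^2/b^2 = 1."""
    if c <= 0:
        # Not handled in this sketch: only the case c > 0 is treated.
        raise ValueError("this sketch only handles x^2 - y^2 = c with c > 0")
    a = b = math.sqrt(c)            # x^2 - y^2 = a^2 with a = sqrt(c)
    e = math.sqrt(1 + b**2 / a**2)  # equal axes, so e = sqrt(2)
    return a, b, e

a, b, e = classify_x2_minus_y2(9)
print(a, b, e)  # a = b = 3.0, e is about 1.41421
```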

● The Hyperbola

- Definition of Hyperbola

- Standard Equation of a Hyperbola

- Vertex of the Hyperbola

- Centre of the Hyperbola

- Transverse and Conjugate Axis of the Hyperbola

- Two Foci and Two Directrices of the Hyperbola

- Latus Rectum of the Hyperbola

- Position of a Point with Respect to the Hyperbola

- Conjugate Hyperbola

- Rectangular Hyperbola

- Parametric Equation of the Hyperbola

- Hyperbola Formulae

- Problems on Hyperbola
